URL parameters can help a site track campaigns, sort products, and filter content, but they can also create a mess fast. One clean page can turn into several near-duplicates before we even notice.

For small business sites, that is where search visibility gets noisy. We do not need enterprise-level complexity to handle it. We just need clear rules for which parameter URLs belong in search, which ones should point back to a canonical page, and which ones should stay out of results. That is the practical side of URL parameter SEO in 2026.

How URL parameters change what search engines see

A parameter is the part of a URL that comes after the question mark. It usually looks like ?key=value, and it can keep growing with & between each extra value.
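To make that anatomy concrete, here is a minimal Python sketch that splits a URL into its path and its parameters with the standard library. The example.com address is made up for illustration.

```python
from urllib.parse import urlsplit, parse_qsl

# Hypothetical URL, used only for illustration.
url = "https://example.com/shoes?color=blue&sort=price"

parts = urlsplit(url)
# parse_qsl returns the key=value pairs in the order they appear.
params = parse_qsl(parts.query)

print(parts.path)  # /shoes
print(params)      # [('color', 'blue'), ('sort', 'price')]
```

Everything before the question mark is the page address. Everything after it is a list of key and value pairs.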

That sounds harmless, but search engines treat each version of a URL as a separate address unless we give them a better signal. A shopper may see the same product page with a different sort order or campaign tag. A crawler may see a new URL and decide it needs to check it too.

Google’s URL structure best practices still push us toward standard formatting, fewer parameters, and readable URLs. Search Engine Journal also covered Google’s revised parameter guidance after the June 2025 update, and the message is the same: keep the structure clean and predictable. The less confusion we create, the easier it is for Google to crawl and index the right page.

If the page looks the same to a shopper, it should usually look the same to Google.

That is the simplest way to think about it. The problem is not parameters themselves. The problem is letting them multiply without a plan.

UTMs are tracking labels, functional parameters change the page

This is where a lot of small sites mix things up. A UTM tag tells us where a visit came from. A functional parameter tells the site which version of the page to show.

Those two jobs are not the same.

| Parameter type | Example | Default SEO treatment | When it might be indexable |
|---|---|---|---|
| UTM tracking | ?utm_source=newsletter&utm_medium=email | Canonicalize to the clean page | Almost never |
| Sorting | ?sort=price | Canonicalize, sometimes noindex | Rarely |
| Filtering | ?color=blue&size=medium | Usually canonicalize | Only if the filtered page is a true landing page |
| Pagination | ?page=2 | Usually keep out of standalone indexing | Only with a clear pagination setup |
| Internal search | ?q=blue+shoes | Stay out of search results | Never |
| Session or cart | ?sessionid=12345 | Stay out of search results | Never |

UTMs belong in campaign links. They help us measure traffic from email, ads, social posts, and affiliate placements. They should not create a new indexable version of the page.

Functional parameters are different. They often change the visible content, at least a little. A product filter, a sort order, or a page number can alter what the visitor sees. That is why functional parameters need a real SEO decision, not a guess.

For a small business site, the default rule is simple. If the parameter does not create a page with clear search value, we do not want it acting like a standalone URL in search.
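One way to keep those defaults from living only in our heads is to write them down as a lookup. The sketch below is a rough illustration, not a standard: the parameter names come from the examples in the table, the treatment labels are our own wording, and the function name `default_treatment` is hypothetical.

```python
from urllib.parse import urlsplit, parse_qsl

# Assumed parameter names, taken from the table's examples.
# A real site would list its own.
TREATMENT = {
    "sort": "canonicalize",
    "color": "canonicalize",
    "size": "canonicalize",
    "page": "review pagination setup",
    "q": "keep out of search",
    "sessionid": "keep out of search",
}

def default_treatment(url: str) -> dict[str, str]:
    """Map each parameter in the URL to its default SEO treatment."""
    result = {}
    for key, _ in parse_qsl(urlsplit(url).query, keep_blank_values=True):
        if key.startswith("utm_"):
            # Tracking labels never deserve their own indexable URL.
            result[key] = "canonicalize"
        else:
            # Unknown parameters are flagged for a human decision, not guessed.
            result[key] = TREATMENT.get(key, "needs a decision")
    return result

print(default_treatment("https://example.com/shoes?utm_source=newsletter&sort=price&ref=abc"))
```

The useful part is the fallback. Any parameter we have not made a decision about shows up as "needs a decision" instead of slipping through.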

Which parameter URLs should actually be indexable?

For most small business sites, the answer is fewer than people expect. Indexable parameter URLs should be the exception, not the habit.

Rare cases when indexing makes sense

A parameter URL can be indexable if it is the best version of a page that has unique value, a clear search intent, and a reason to rank on its own. Even then, we usually ask a second question first: can we turn that page into a clean URL instead?

That is often the better move. A clean path is easier to link to, easier to remember, and easier for crawlers to trust.

Pages we should canonicalize

Most parameter URLs belong here. If the page content is basically the same, but the URL changes because of UTM tags, sorting, filters, or minor presentation changes, we point the parameter version back to the clean version. That keeps signals on one main URL.
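As a rough sketch of what "point back to the clean version" means in practice, the Python below strips tracking and presentation parameters and builds the canonical link tag. The `PRESENTATION_PARAMS` set and both function names are assumptions for illustration. Which parameters count as presentation-only is a decision each site has to make for itself.

```python
from html import escape
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumption: on this hypothetical site, sorting never changes the core content.
PRESENTATION_PARAMS = {"sort"}

def canonical_url(url: str) -> str:
    """Drop UTM and presentation parameters, keep everything else."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_") and key not in PRESENTATION_PARAMS
    ]
    # An empty query string drops the "?" entirely; the fragment is discarded.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))

def canonical_link_tag(url: str) -> str:
    """Build the <link> element that belongs in the page head."""
    return f'<link rel="canonical" href="{escape(canonical_url(url))}">'

print(canonical_link_tag(
    "https://example.com/shoes?utm_source=newsletter&utm_medium=email&sort=price"
))
# <link rel="canonical" href="https://example.com/shoes">
```

Every UTM or sorted variant of the page ends up carrying the same tag, which is what keeps the signals on one URL.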

This is where our canonical tag SEO guide fits in. Canonical tags are a strong signal, not a magic wand, but they do help search engines understand which version we want to keep.

Pages that should stay out of search

Some URLs should not compete for rankings at all. Internal search pages, cart pages, checkout steps, account pages, session URLs, and temporary utility pages belong outside search results.

If we let those pages in, we waste crawl attention and blur the site structure. That is the opposite of what a small business site needs.

Canonical tags, noindex, robots.txt, and redirects all do different jobs

This is the part that clears up most confusion. These tools are related, but they are not interchangeable.

A canonical tag tells search engines which version of a page we prefer. It works well for duplicate or near-duplicate parameter URLs, especially UTM versions and filter versions that show the same core content.

A noindex tag tells search engines not to show a page in results. That is useful for internal search pages, cart pages, thank-you pages, and other utility URLs that should stay useful for visitors but out of search.

Robots.txt does something else. It controls crawling, not indexing. If we block a URL in robots.txt before search engines can see the page, they may not see the canonical tag or the noindex tag either. That is why robots.txt is best for crawl control, not as the first fix for index problems.
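A quick way to catch that conflict is to check whether a page we plan to noindex is also blocked from crawling. The sketch below uses Python's built-in `urllib.robotparser`. The robots.txt rules and the function name `crawler_can_see_tags` are hypothetical, and Python's parser does simple prefix matching, so it may not agree with Google on wildcard rules. Treat it as a rough check only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
"""

def crawler_can_see_tags(url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if robots.txt lets a crawler fetch the page at all.

    A crawler that cannot fetch the page cannot read its canonical
    or noindex tag either.
    """
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)

print(crawler_can_see_tags("https://example.com/search?q=blue+shoes"))  # False
print(crawler_can_see_tags("https://example.com/shoes/"))               # True
```

If the first check returns False for a page that relies on a noindex tag, the block and the tag are working against each other.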

Redirects are for URLs that should not stay live at all. If a parameter version is simply an old or messy path that nobody should use, a 301 redirect to the clean URL is often the right answer. If we are cleaning up old parameter paths after a redesign or migration, site migration redirects matter too, because redirect chains can turn one problem into three.
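Redirect chains are easy to spot once the redirects are written down as a simple map. The sketch below assumes we can export our rules as old URL to new URL pairs. The paths and function names are made up for illustration.

```python
def final_destination(url: str, redirects: dict[str, str], max_hops: int = 10) -> tuple[str, int]:
    """Follow a redirect map and return (final URL, number of hops)."""
    hops = 0
    seen = {url}
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            raise ValueError(f"redirect loop or overly long chain at {url}")
        seen.add(url)
    return url, hops

def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every old URL straight at its final destination."""
    return {old: final_destination(old, redirects)[0] for old in redirects}

# Hypothetical rules left over from two separate cleanups.
redirects = {
    "/shoes.php?id=7": "/products/shoes",
    "/products/shoes": "/shoes/",
}

print(final_destination("/shoes.php?id=7", redirects))  # ('/shoes/', 2)
print(flatten(redirects))
```

Any URL that takes more than one hop is a candidate for a direct redirect to the final page.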

Canonical for duplicates, noindex for pages that should exist but not rank, redirects for old URLs that should be retired.

That rule keeps the cleanup simple. We do not need to force every parameter through the same fix.

One more thing matters here. We no longer have the old Search Console parameter tool to manage this for us. The job now falls on page structure, internal linking, canonical tags, noindex rules, and good URL hygiene.

A simple parameter audit for a small business site

We do not need a giant crawl report to get started. We need a short, honest audit.

If we already keep a Google Search Console guide handy, this is where it pays off. Search Console shows us what Google is seeing, what it is indexing, and where parameter URLs are sneaking in.

- Find the parameter URLs that exist right now

  Start with Google Search Console, server logs if we have them, and a quick site:yourdomain.com inurl:? search. We are looking for patterns, not perfection.

- Sort them into three groups

  Put each URL into one of these buckets: tracking, functional, or junk. Tracking URLs usually mean UTMs. Functional URLs usually change content, like sort or filter options. Junk URLs are things like session IDs, internal search results, and temporary paths.

- Check whether the parameter adds real search value

  If the answer is no, it should not be indexable. If the page is just a duplicate with a different tag attached, canonicalize it. If it is a utility page, keep it out of search with noindex or a redirect.

- Decide the fix before making the change

  Clean URLs first when possible. Canonical tags next. Noindex when the page should stay live but not rank. Redirects when the URL should not exist as a separate path.

- Test the page after the change

  Open the live URL, check the source, and confirm the canonical tag or noindex tag is in place. Then watch Search Console for a few weeks to see whether the parameter version drops out of indexing.
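The "check the source" step can be done by eye, but a small script helps when there are more than a handful of pages. This is a minimal sketch using Python's built-in HTML parser. The class name, function name, and sample HTML are our own, and it only reads tags in the page source. A noindex sent through an X-Robots-Tag HTTP header would need a separate check.

```python
from html.parser import HTMLParser

class SeoTagFinder(HTMLParser):
    """Collect the canonical URL and robots meta directive from page HTML."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "link" and (attr.get("rel") or "").lower() == "canonical":
            self.canonical = attr.get("href")
        elif tag == "meta" and (attr.get("name") or "").lower() == "robots":
            self.robots = attr.get("content")

def check_page(html: str) -> dict:
    """Report the canonical target and whether a noindex directive is present."""
    finder = SeoTagFinder()
    finder.feed(html)
    return {
        "canonical": finder.canonical,
        "noindex": "noindex" in (finder.robots or "").lower(),
    }

# Hypothetical page source, for illustration only.
SAMPLE = (
    '<html><head>'
    '<link rel="canonical" href="https://example.com/shoes">'
    '<meta name="robots" content="noindex, follow">'
    '</head><body></body></html>'
)

print(check_page(SAMPLE))
# {'canonical': 'https://example.com/shoes', 'noindex': True}
```

In a real audit we would feed it the HTML of each parameter URL and compare the result against the fix we decided on.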

If we want this to become a habit, not a one-time cleanup, this belongs inside a regular technical SEO checklist. Small sites do better when we review these patterns on a schedule.

Common mistakes that keep causing trouble

Some parameter problems keep showing up because they feel harmless at first.

- We let every campaign link create a new indexable page. A newsletter UTM is fine in an ad or email, but it should not become a new search result.

- We block parameter URLs in robots.txt before adding a canonical or noindex tag. That can hide the very signals search engines need to see.

- We use multiple parameter orders for the same page, like ?color=blue&sort=price in one place and ?sort=price&color=blue in another. That creates unnecessary duplicates.

- We keep internal search URLs live in the site architecture. Those pages help users on the site, but they rarely belong in search results.

- We redirect every parameter version to the homepage. That feels neat, but it often gives users the wrong destination and sends search engines mixed signals.
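The parameter-order mistake is the easiest one in this list to fix in code. A minimal sketch, assuming we control how internal links are generated: sort the parameters before writing the link, so both orderings collapse into one URL. The function name is hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

def normalize_param_order(url: str) -> str:
    """Return the URL with its parameters sorted by key, then value."""
    parts = urlsplit(url)
    pairs = sorted(parse_qsl(parts.query, keep_blank_values=True))
    return urlunsplit(
        (parts.scheme, parts.netloc, parts.path, urlencode(pairs), parts.fragment)
    )

print(normalize_param_order("/shoes?sort=price&color=blue"))  # /shoes?color=blue&sort=price
print(normalize_param_order("/shoes?color=blue&sort=price"))  # /shoes?color=blue&sort=price
```

Alphabetical order is an arbitrary choice here. What matters is that every template and menu uses the same one.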

Good handling looks boring, and that is a good thing. A clean URL, one canonical version, and a clear rule for duplicates usually beat a pile of clever fixes.

For sites that are already heavy on filters, tagging, or campaign URLs, crawl waste becomes part of the problem too. Our crawl budget optimization guide explains why that matters, especially when a small site does not have pages to spare.

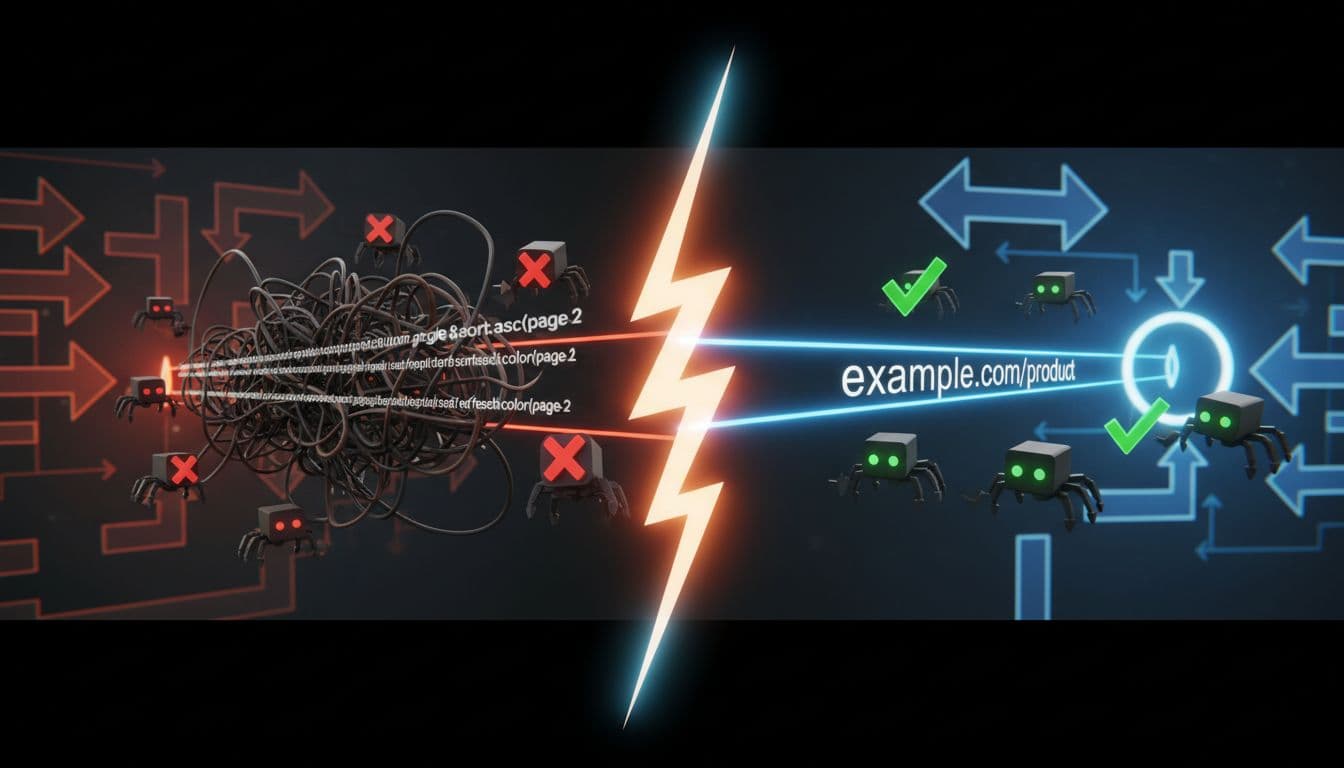

If we need a simple example, this is the difference:

- Bad: /shoes?utm_source=instagram&utm_medium=social&sort=price

- Better: /shoes/ in navigation, with UTMs only on campaign links

- Bad: /search?q=blue+shoes

- Better: noindex the search results page, or keep it out of search entirely

That is not fancy work. It is just clean site management.

Keep the rules simple, and the site gets easier to manage

URL parameters are not the enemy. Unclear rules are the enemy.

For a small business site, the winning approach in 2026 is straightforward. Keep indexable URLs clean when we can. Canonicalize duplicates when the content is the same. Keep utility pages out of search. Use redirects when a URL should be retired. That gives search engines one clear path instead of five confusing ones.

The question mark in a URL only becomes a problem when we let it multiply without control. Once we decide which parameter pages matter, the rest gets much easier to handle.

A small site does not need a complicated parameter strategy. It needs a simple one, used consistently.