Google doesn’t want every URL we publish. In 2026, it still crawls a lot, but it stores fewer weak pages than many site owners expect.

That is the heart of index bloat. When too many thin, duplicate, filtered, or expired URLs sit in the index, our strongest pages lose clarity. The fix starts when we separate indexing problems from crawl waste and ranking problems.

What index bloat means, and what it does not mean

Index bloat happens when Google indexes more pages than our site truly needs. Those extra URLs often come from tag archives, faceted filters, internal search results, tracking parameters, old landing pages, and near-duplicate content.

A bloated index is like a file cabinet packed with copies, scraps, and drafts. The important files are still there, but they are harder to sort and trust. Google can spend time on the wrong URLs, and our best pages may compete with weaker versions.

Recent coverage, including Search Engine Land’s guide to index bloat and this beginner-friendly explanation from 4 SEO Help, lines up with what we see in audits. Google still makes a clear distinction between crawling and indexing. A page can be crawled and never stored, or indexed and still perform poorly.

If we need a quick refresher on the basics, our SEO indexing guide explains how discovery, indexing, and ranking connect.

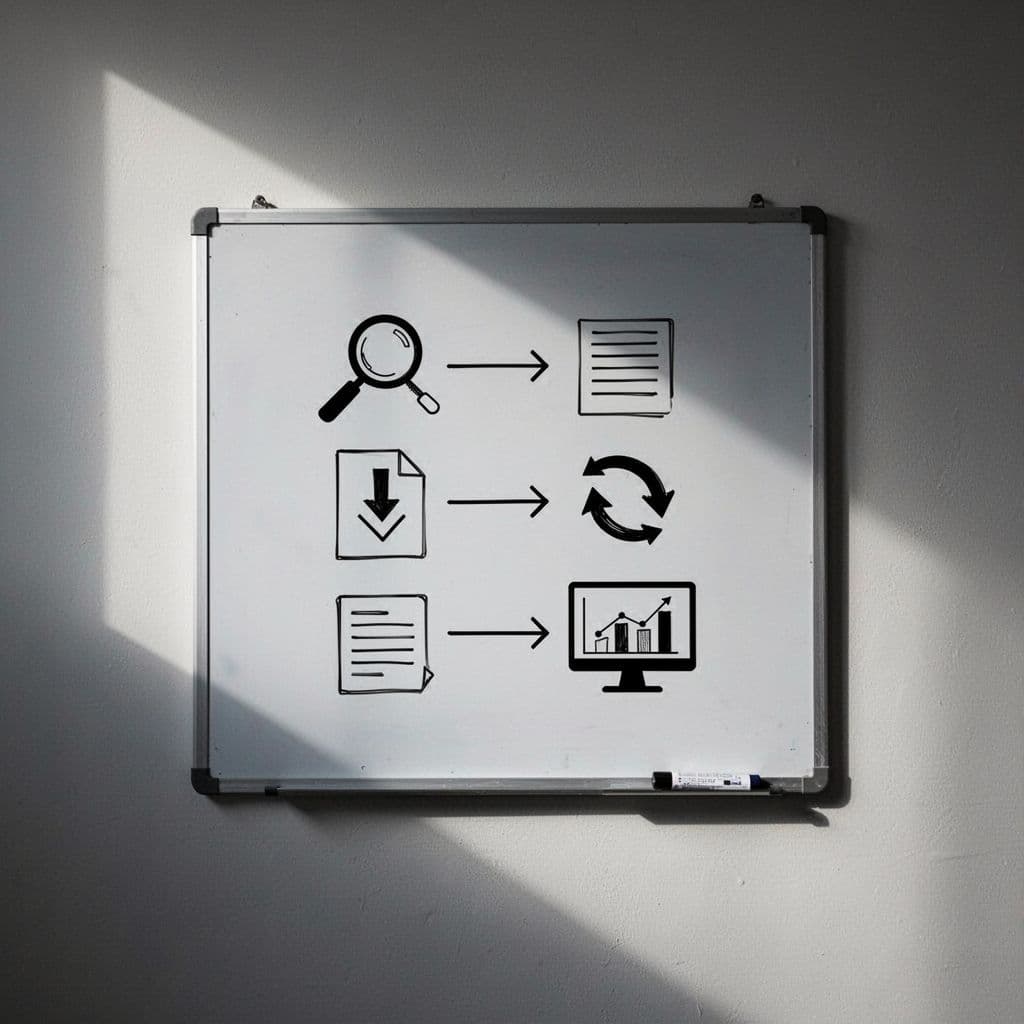

This quick comparison helps us label the problem correctly:

| Issue | What it means | Main fix |
|---|---|---|
| Index bloat | Too many low-value pages are already indexed | Remove, combine, or de-prioritize indexed junk |
| Crawl issue | Google spends time fetching the wrong URLs | Cut crawl waste and tighten site structure |
| Ranking issue | A good page is indexed but not competitive | Improve content, intent match, and authority |

If Google can crawl a page, it may still choose not to index it. That gap causes a lot of confusion.

The takeaway is simple. We fix faster when we know whether the problem is storage, discovery, or competition.

How to diagnose index bloat in 2026

Google Search Console is our first stop. We start with the Pages report, then compare indexed URLs with the pages that actually matter. If our sitemap lists 400 important URLs but Google reports several thousand indexed pages, that gap deserves a closer look.
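The sitemap-versus-index comparison can be sketched as a simple set difference. This is a minimal illustration, not a finished tool: it assumes we already have the sitemap XML as text and a list of indexed URLs exported from Search Console (which may be capped or sampled). The function names `sitemap_urls` and `index_gap` are hypothetical.

```python
from xml.etree import ElementTree

# Namespace used by standard XML sitemaps (sitemaps.org protocol).
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Collect every <loc> value from a sitemap document."""
    root = ElementTree.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall(".//sm:loc", SITEMAP_NS)
        if loc.text
    }


def index_gap(sitemap: set[str], indexed: set[str]) -> dict[str, set[str]]:
    """Split the difference between what we submit and what is indexed."""
    return {
        # Possible bloat: indexed, but we never listed it as important.
        "indexed_not_in_sitemap": indexed - sitemap,
        # Possible indexing problem: important, but not in the index.
        "in_sitemap_not_indexed": sitemap - indexed,
    }
```

The first set is where bloat candidates usually show up. The second points to an indexing problem rather than bloat, so it belongs in a separate review.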

Next, we inspect a sample of suspect URLs. We check whether the page is indexable, which canonical Google selected, whether a noindex tag exists, and whether the URL appears in the sitemap. That tells us if the issue is a template pattern or a one-off mistake.
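For a batch of suspect URLs, part of that inspection can be approximated from the page HTML. The sketch below assumes we have already fetched the HTML ourselves, and `index_signals` is a hypothetical helper name. It reads only the declared canonical and the robots meta tag. It cannot see the canonical Google actually selected (that comes from URL Inspection), and it does not check an `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class IndexSignalParser(HTMLParser):
    """Record the robots meta directive and declared canonical of a page."""

    def __init__(self) -> None:
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() in ("robots", "googlebot"):
            if "noindex" in attr.get("content", "").lower():
                self.noindex = True
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def index_signals(html: str) -> dict:
    """Return the on-page indexing signals found in the HTML."""
    parser = IndexSignalParser()
    parser.feed(html)
    return {"noindex": parser.noindex, "canonical": parser.canonical}
```

Running this over a few URLs per template makes it easier to tell a template pattern from a one-off mistake.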

After that, we run a full crawl with a site crawler such as Screaming Frog or Sitebulb. We want exports for indexable URLs, duplicate titles, duplicate content patterns, canonicals, parameters, and status codes. Then we match that crawl data with Search Console performance data.

Low clicks alone do not prove bloat. Some pages support conversions or internal navigation. What matters is value. Does the URL have a purpose in search, or is it only clutter?
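Matching crawl data with performance data could look something like the sketch below. The column names `url`, `indexable`, and `clicks` are assumptions for illustration. Real exports from a crawler or Search Console use their own headers, so the keys would need renaming. The output is a list of review candidates, not a delete list, because zero clicks only flags a URL for a closer look.

```python
import csv
import io


def review_candidates(crawl_csv: str, performance_csv: str) -> list[str]:
    """Return indexable URLs that earned no clicks in the performance export."""
    clicks = {
        row["url"]: int(row["clicks"])
        for row in csv.DictReader(io.StringIO(performance_csv))
    }
    return [
        row["url"]
        for row in csv.DictReader(io.StringIO(crawl_csv))
        # URLs missing from the performance export count as zero clicks.
        if row["indexable"].strip().lower() == "yes"
        and clicks.get(row["url"], 0) == 0
    ]
```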

Common patterns include:

- filter and sort URLs

- tag and author archives

- internal search pages

- print pages and session IDs

- old HTTP or trailing-slash variants

- thin local pages with only a few changed words
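Several of these patterns can be spotted from the URL alone. The following is a rough sketch of a classifier for crawl exports. The parameter names and path prefixes are assumptions chosen for illustration and will differ from site to site. Thin local pages cannot be detected this way, since they need a content comparison.

```python
import re
from urllib.parse import parse_qs, urlsplit

# Hypothetical parameter names; adjust them to the site being audited.
FILTER_PARAMS = {"sort", "order", "color", "size", "filter"}
TRACKING_PARAMS = {"gclid", "fbclid"}
SESSION_PARAMS = {"sessionid", "sid", "phpsessid"}
SEARCH_PARAMS = {"s", "q"}


def classify(url: str) -> str:
    """Label a URL with the first bloat pattern it matches."""
    parts = urlsplit(url)
    params = {key.lower() for key in parse_qs(parts.query, keep_blank_values=True)}
    path = parts.path.lower()

    if parts.scheme == "http":
        return "http-variant"
    if params & SESSION_PARAMS:
        return "session-id"
    if path.startswith("/search") or params & SEARCH_PARAMS:
        return "internal-search"
    if any(p.startswith("utm_") for p in params) or params & TRACKING_PARAMS:
        return "tracking"
    if params & FILTER_PARAMS:
        return "filter-or-sort"
    if re.match(r"^/(tag|author)/", path):
        return "tag-or-author-archive"
    if "/print/" in path or "print" in params:
        return "print"
    return "other"
```

Counting the labels across a full crawl shows which template or parameter produces the most clutter, which is usually a better starting point than reviewing URLs one by one.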

A site: search can help as a rough spot check, but it is not a full count. For URLs that seem stuck between discovery and storage, our guide to fixing crawled-not-indexed pages can help with the next round of checks.

How to fix index bloat without hurting good pages

We should not delete pages at random. A safer method is to sort every questionable URL into five buckets: keep, improve, combine, hide, or retire.

Here is the checklist that works well for beginners:

- Improve pages with clear search value. If a page has backlinks, conversions, or solid topic fit, keep it and make it better. Add useful copy, tighten headings, and support it with stronger internal links.

- Use noindex for pages that people may need but that do not belong in search results. Good examples include thank-you pages, login areas, thin tag pages, and some filtered views. Keep these pages crawlable long enough for Google to see the directive. Our guide to using noindex without blocking crawlers explains the setup.

- Use canonicals for duplicate or near-duplicate versions. Parameter URLs, sort orders, tracking copies, and print pages often belong here. A canonical tells Google which version we want to carry the main signals. Google treats it as a strong hint rather than a command, so the selected canonical is still worth checking. Our guide to canonical tags for duplicate URLs covers the common cases.

- Use 301 redirects when an old page has a true replacement. Redirect expired products, outdated posts, or duplicate pages to the closest match, not to the homepage.

- Use robots.txt to reduce crawl waste, not to remove indexed URLs. This is where beginners often get tripped up. If we block a URL before it drops out of the index, Google cannot crawl the page, so it never sees the noindex tag and the URL can stay indexed.

- Prune and consolidate thin content. Merge overlapping blog posts, weak service pages, and shallow location pages into stronger assets. Then update internal links, breadcrumbs, and XML sitemaps so our top pages get the clearest signals.
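The robots.txt and noindex conflict in the checklist is easy to test for before a cleanup ships. Below is a minimal sketch using Python's standard `urllib.robotparser`. The helper name `blocked_noindex_urls` is hypothetical, and the standard-library parser may not interpret wildcard rules the way Google does, so the result is a first-pass check rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser


def blocked_noindex_urls(
    robots_txt: str, noindex_urls: list[str], agent: str = "Googlebot"
) -> list[str]:
    """Return noindex URLs that robots.txt would stop the crawler from fetching."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    # A blocked URL is a problem here: the crawler cannot read its noindex tag.
    return [url for url in noindex_urls if not parser.can_fetch(agent, url)]
```

Any URL this returns carries a noindex tag that the crawler is not allowed to read. The safer order is to lift the block, wait for the page to drop out of the index, and only then consider blocking it again.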

After the cleanup, we monitor Search Console for several weeks. A cleaner index often leads to faster re-crawling, better focus on key pages, and fewer duplicate headaches.

A smaller index is often a stronger one

Index bloat usually grows from templates, filters, and content habits, not one bad page. That is why a lasting fix depends on better rules, not a one-time purge.

When we keep only useful pages indexable, guide duplicates with canonicals, and retire weak URLs with care, index bloat becomes much easier to manage. The result is a cleaner index, clearer signals, and more room for our best pages to rank.